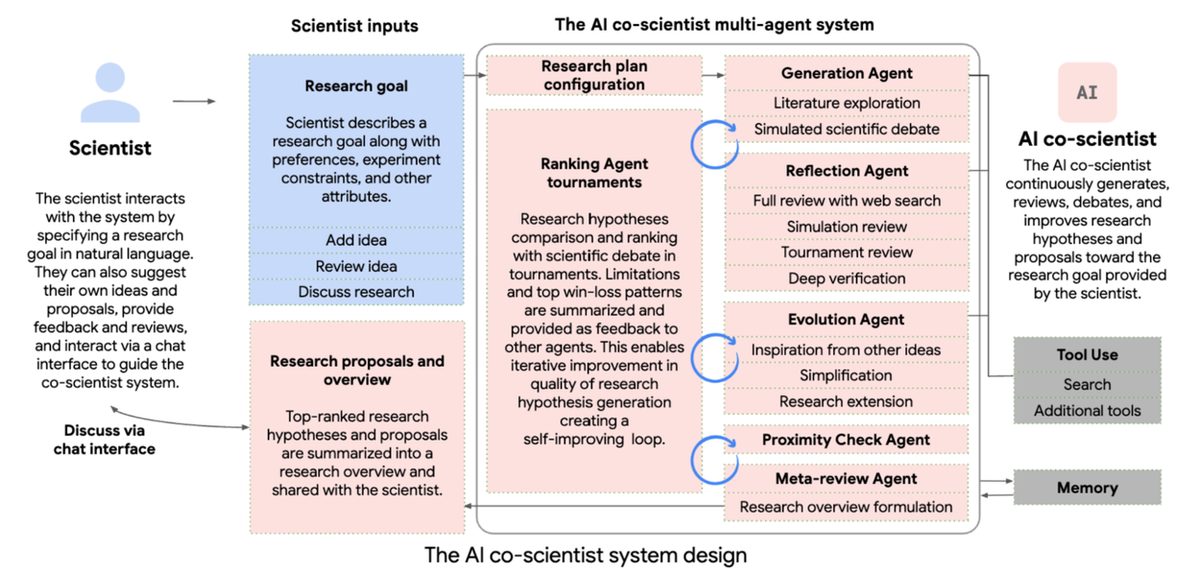

AI hypothesis generation becomes standard lab infrastructure within three years

As more validated results are published and the Trusted Tester Program expands, AI hypothesis generation tools become as routine in research labs as statistical software. Google, OpenAI, and Microsoft compete on accuracy and domain breadth. The bottleneck in science shifts decisively from generating ideas to the capacity to validate them experimentally, shortening discovery timelines in drug development and materials science by years. Funding agencies begin requiring AI-assisted literature reviews in grant applications.