Ambrose Bierce

Fictional AI pastiche, not a real quote.

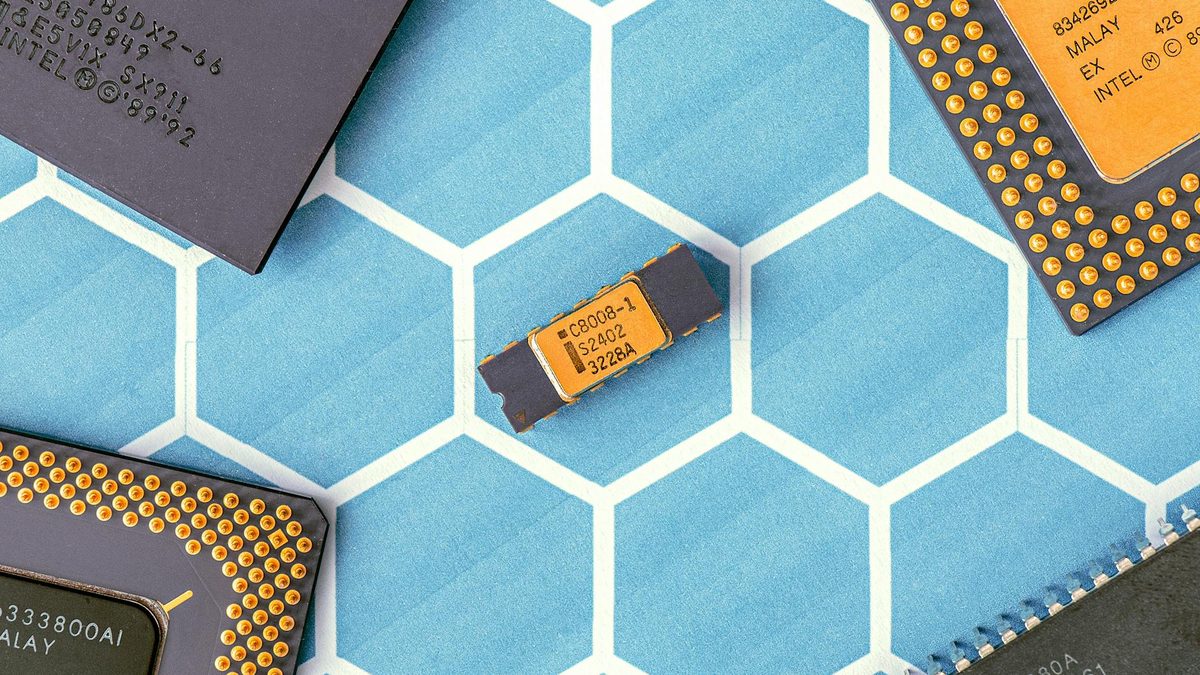

"Nine-figure fortunes staked on the certainty that the king of a hill will presently be displaced — this is not investment but rather the ancient ritual of ambitious men paying to watch other ambitious men fail. That four in five chips bear one maker's mark is called a monopoly by the envious and an ecosystem by the beneficiary; that investors now wager two hundred millions on the word "inference" suggests they have mastered the vocabulary of the future without troubling themselves to understand it."