Intel's Itanium and the x86 disruption that wasn't (2001-2012)

What Happened

Intel launched Itanium in 2001 as a clean-break replacement for x86 processors, expecting enterprise customers to migrate to the new architecture. AMD responded with x86-64, extending the existing architecture to handle 64-bit workloads without requiring customers to rewrite their software. Itanium ultimately sold fewer than one million units while x86-64 became the industry standard.

Outcome

Intel spent billions developing Itanium while AMD captured server market share by maintaining backward compatibility.

The episode demonstrated that software ecosystem lock-in can matter more than raw hardware performance: customers chose the architecture that preserved their existing code investment.

Why It's Relevant Today

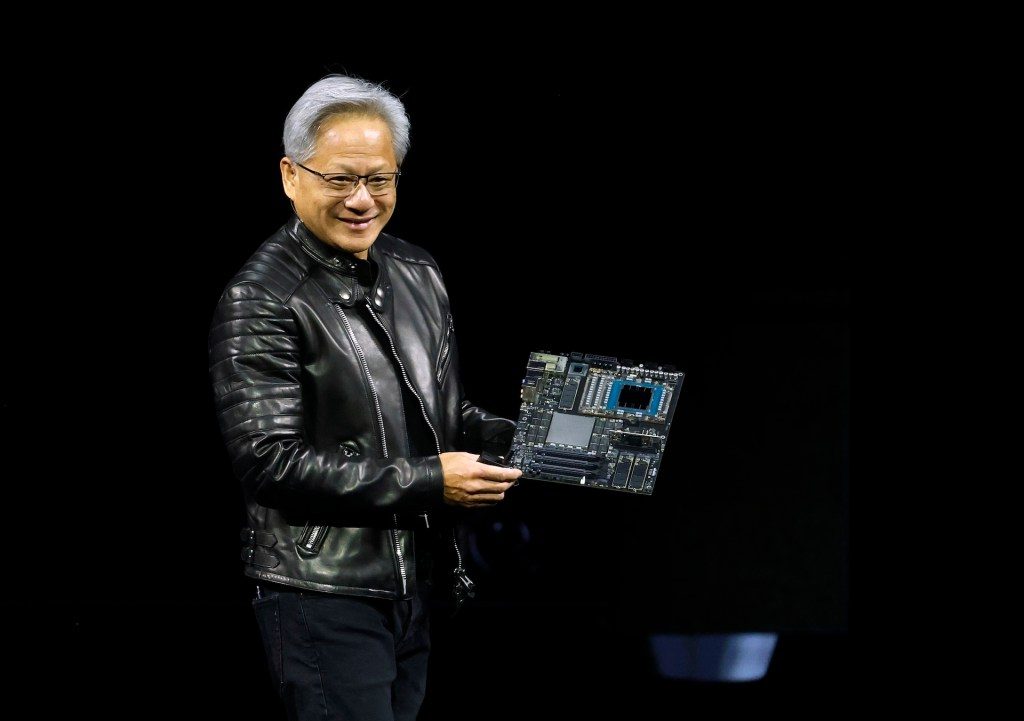

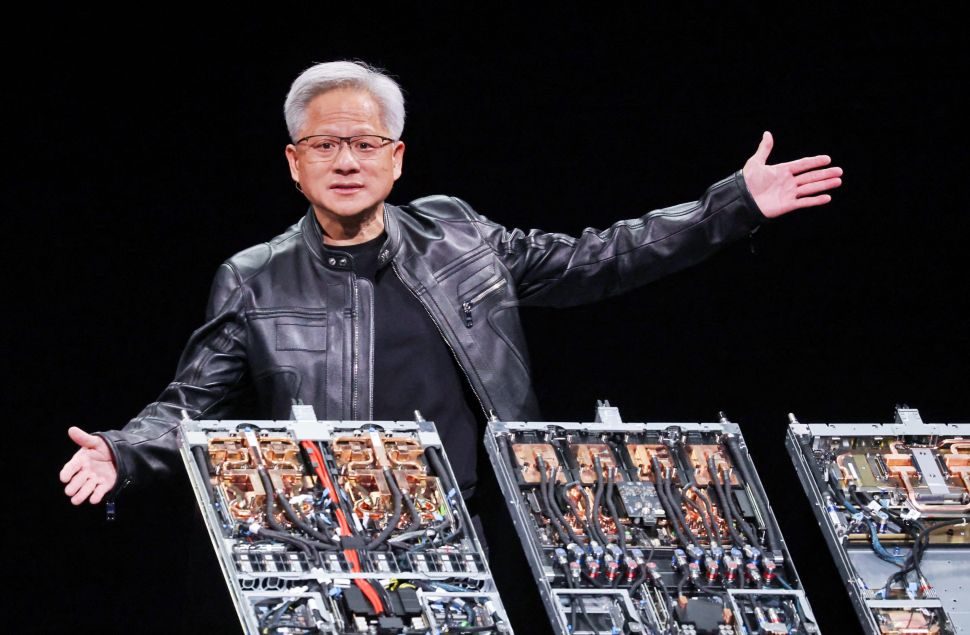

Nvidia's CUDA ecosystem and now NemoClaw follow the same logic: performance leadership matters, but the software layer that keeps developers from switching may be the more durable competitive advantage against custom ASICs.