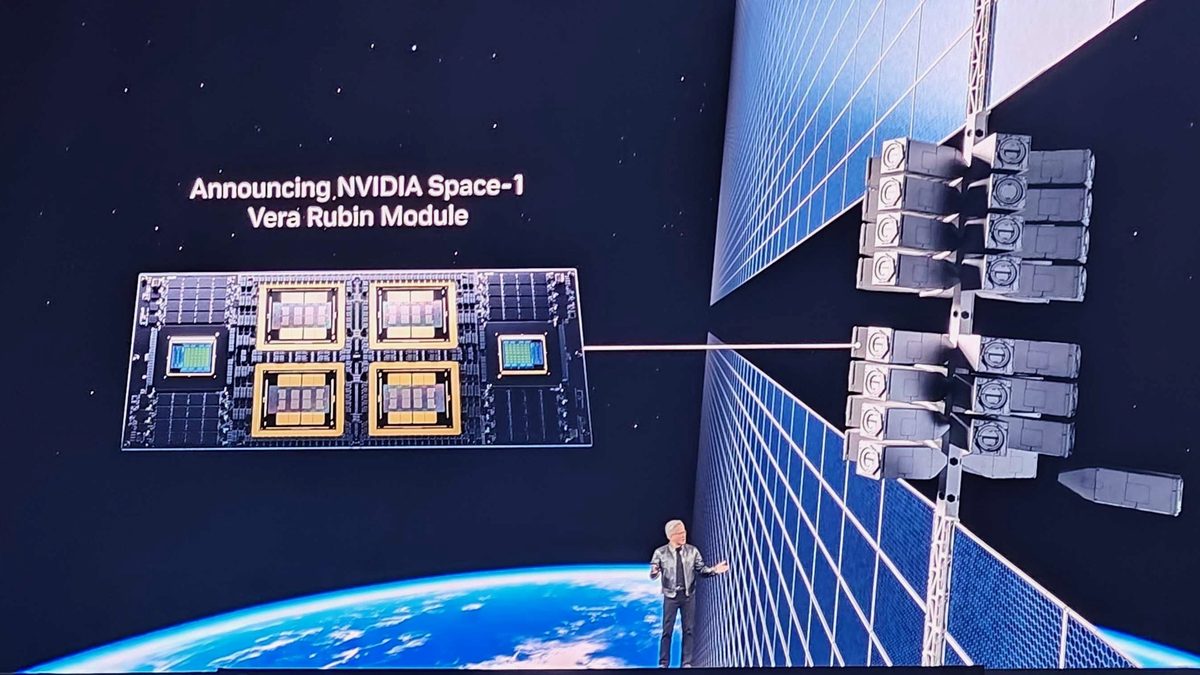

Earth observation satellites generate petabytes of imagery every day, but only about two percent of it ever reaches the ground. The bottleneck is physics: a satellite in low Earth orbit gets roughly ten minutes of ground-station contact per pass, and radio bandwidth cannot keep up with sensor resolution that doubles every few years. Nvidia's answer, announced at its annual developer conference on March 17, 2026, is to stop trying to move the data down and instead move the AI up. The Vera Rubin Space-1 Module packs 25 times the compute power of Nvidia's H100 chip into a radiation-hardened package designed to run large language models and other foundation models directly in orbit.

This is not a concept paper. Starcloud already flew an H100 in space in November 2025 and trained the first AI model in orbit. Axiom Space launched two orbital data center nodes in January 2026. Kepler Communications has 40 Nvidia processors distributed across 10 satellites right now. But Nvidia is not alone in this race. SpaceX filed with federal regulators in January 2026 for a constellation of up to one million satellites that could serve as orbital compute nodes, and Google announced Project Suncatcher to put its own AI chips in orbit. The question is no longer whether computing moves to space; it is who will control the infrastructure when it does.